The Coordination of AI

Managing, Auditing, and Directing Your Virtual, Personal Workforce

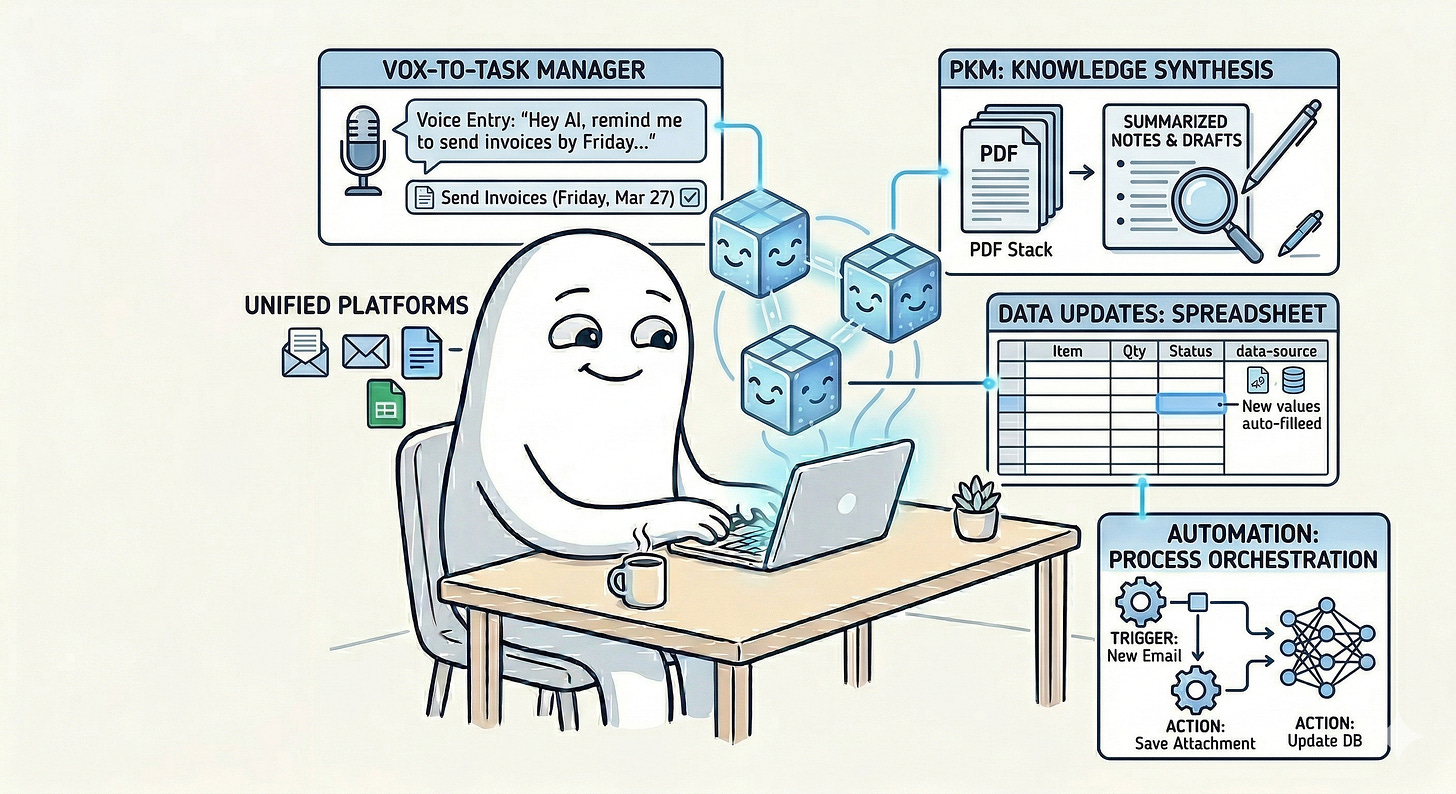

Artificial intelligence is steadily moving from a single tool you open on demand to a growing collection of tools, systems, assistants, and semi-autonomous agents that can help you get work done across your personal and professional life.

Up to this point in this series, I have written about several distinct ways AI is already changing per…